The AI Singularity in Mathematics: How Artificial Intelligence is Reshaping Discovery and Education

The summer of 2025 marked a watershed moment in the field of mathematics. At the International Mathematical Olympiad, a prestigious annual competition for the world’s brightest high school students, artificial intelligence models solved five of the six problems, an unprecedented feat. The results astonished a mathematical community that had not anticipated such rapid progress in AI’s problem-solving abilities, and their implications would soon extend far beyond puzzle-solving. Olympiad problems, however intricate, have known answers, unlike the open-ended questions that define cutting-edge mathematical research.

This unexpected display, however, did not go unnoticed. Mathematicians who had previously dismissed AI as too unreliable for serious academic pursuits began to explore its potential. To their surprise, these early adopters discovered that AI models were not only adept at solving complex puzzles but also capable of contributing to genuine research breakthroughs. The efficiency gains were staggering; tasks that once consumed weeks or months of human effort could now be accomplished in mere days. As Terence Tao, a distinguished mathematician at the University of California, Los Angeles, aptly stated, "2025 was the year when AI really started being useful for many different tasks."

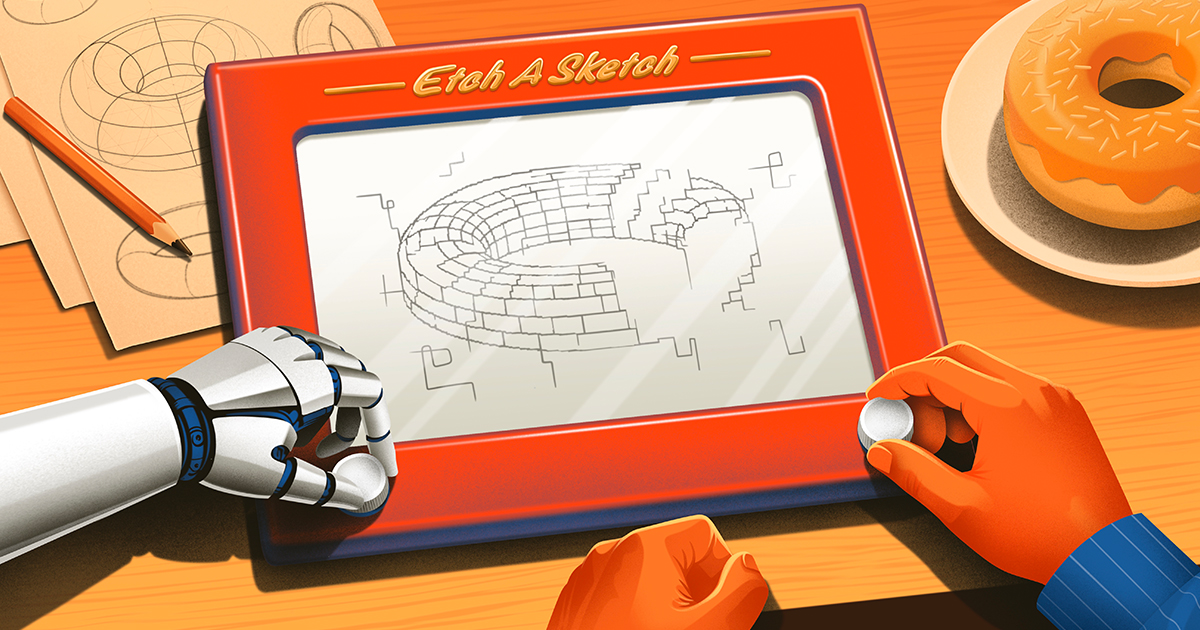

While no single AI-generated discovery immediately heralded a world-altering paradigm shift, some of these new results attained a level of rigor and novelty comparable to those published in professional mathematical journals. In some instances, algorithms autonomously formulated conjectures, devised proofs, and rigorously verified them with minimal human oversight. In other scenarios, extensive dialogue with advanced large language models (LLMs) like ChatGPT, Claude, and Gemini proved instrumental in uncovering novel proof strategies. Tao further illustrated this collaborative dynamic, likening it to having "a shovel and a pickaxe together" to "bore a tunnel," emphasizing the iterative and experimental nature of this new research paradigm, characterized by "a lot of throwing things at the wall to see what sticks."

Daniel Litt of the University of Toronto concurred, observing that even when AI succeeds mainly at relatively basic problems, it is fundamentally "changing how mathematics is done." Tao elaborated on this transformation, predicting that the practice of mathematics will "look and feel altogether different from the way mathematics was traditionally done." He highlighted a shift from studying problems one at a time to analyzing thousands of problems simultaneously, which paves the way for sophisticated statistical studies. Although most mathematicians agree that AI will not replace them, Tao acknowledged the necessity of significant "institutional changes [and] cultural changes" to adapt to this evolving landscape.

The Shifting Landscape of Mathematical Research

The integration of AI into mathematics is not without its challenges and debates. Akshay Venkatesh of the Institute for Advanced Study, a Fields Medal recipient like Tao, voiced concerns that over-reliance on AI could diminish mathematicians’ direct engagement with fundamental mathematical understanding. While both scholars foresee a profound impact, Venkatesh champions the preservation of certain "valuable things in our culture." This sentiment underscores a broader academic struggle to balance technological advancement with the enduring principles of intellectual inquiry.

The allure of AI’s potential has also prompted a notable exodus of mathematicians from academia to the corporate world. Prominent tech firms such as OpenAI and Google, along with burgeoning AI-focused startups like Harmonic, Logical Intelligence, Axiom Math, and Math Inc., are actively recruiting mathematical talent. Jeremy Avigad, director of Carnegie Mellon University’s Institute for Computer-Aided Reasoning in Mathematics, explained this trend: "One reason there is so much interest in AI for mathematics in the corporate world is that people are recognizing that the key to general intelligence is combining the insights you get from machine learning and the precision you get from mathematics."

By early 2026, the initial shock surrounding AI’s computational prowess had evolved into a sense of wonder. A February challenge, "First Proof," offered participants a week to deploy their AI models to tackle ten research-level mathematical questions across diverse fields. These problems were deliberately chosen to minimize the likelihood of their appearance in the models’ training data. Demonstrating varying degrees of autonomy, the AI systems successfully resolved more than half of the posed challenges. If the Olympiad results signaled AI’s entry into advanced undergraduate mathematics, the "First Proof" outcomes marked its graduation to a postgraduate level. Litt aptly summarized the significance in a blog post, asserting, "It’s very likely that this technology is bigger than the computer."

A Creative Evolution: From Niche Exploration to Research Collaboration

The transformative capabilities of AI in mathematics, exemplified by the events of summer 2025, did not emerge overnight. DeepMind, a leading AI research lab, had been pursuing mathematical problem-solving with AI since 2018, according to Pushmeet Kohli, its vice president of science. François Charton, now with Axiom, began experimenting with machine learning for mathematical problems as early as 2019.

In these nascent stages, AI in mathematics was largely a niche pursuit. Charton and a small group of researchers initially focused on verifying known solutions using AI, primarily to test the efficacy of new techniques. By 2024, the focus began to shift towards pioneering new ground. Researchers started identifying problems with abundant data, leveraging AI to construct mathematical objects with quantifiable properties, such as optimal arrangements of points on a grid that avoid forming isosceles triangles.
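To make "mathematical objects with quantifiable properties" concrete, here is a minimal sketch of the grid problem mentioned above: the property to maximize is the number of points, and the constraint (no three chosen points form an isosceles triangle) can be checked by brute force. The greedy row-by-row scan is a hypothetical baseline for illustration only, not the method researchers used; AI-driven search aims to find larger sets than simple heuristics like this. Counting collinear triples such as (0,0), (1,0), (2,0) as isosceles is also an assumption.

```python
from itertools import combinations


def sq_dist(p, q):
    """Squared Euclidean distance between grid points (integers, so exact)."""
    return (p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2


def is_isosceles(p, q, r):
    """True if two of the three side lengths are equal.

    Assumption: degenerate (collinear) triples also count, since one point
    is then equidistant from the other two.
    """
    a, b, c = sq_dist(p, q), sq_dist(p, r), sq_dist(q, r)
    return a == b or a == c or b == c


def is_isosceles_free(points):
    """Brute-force check of every triple of points."""
    return not any(is_isosceles(p, q, r) for p, q, r in combinations(points, 3))


def greedy_isosceles_free(n):
    """Scan the n x n grid row by row, keeping each point that forms no
    isosceles triple with any two points already chosen (a simple baseline)."""
    chosen = []
    for x in range(n):
        for y in range(n):
            candidate = (x, y)
            if not any(is_isosceles(candidate, p, q) for p, q in combinations(chosen, 2)):
                chosen.append(candidate)
    return chosen


for n in range(2, 9):
    pts = greedy_isosceles_free(n)
    print(n, len(pts), pts)
```

Because every new point is checked against all pairs chosen before it, each triple in the final set has been tested once, when its last point was added.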

A significant collaboration began in January 2025, when Terence Tao and Javier Gómez-Serrano of Brown University partnered with DeepMind researchers Adam Wagner and Bogdan Georgiev. Their project used AlphaEvolve, a DeepMind system that employs Gemini to generate large amounts of Python code. This code was then refined through genetic algorithms, a process akin to biological evolution, in search of optimal solutions to mathematical problems. Over several months, the team applied AlphaEvolve to a new problem every day or two.
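The article describes AlphaEvolve only at a high level: a language model proposes code, and an evolutionary process keeps what scores best. The sketch below shows just the generic select, mutate, and replace loop of an evolutionary algorithm, under loudly stated assumptions: a random one-element toggle is a hypothetical stand-in for the language model’s proposals, and the toy objective (the largest subset of {0, ..., n-1} containing no three-term arithmetic progression) is chosen for illustration and is not a problem named in the article. This is not AlphaEvolve’s actual implementation.

```python
import random


def has_3ap(s):
    """True if s contains a three-term arithmetic progression a, b, 2b - a."""
    return any(b > a and 2 * b - a in s for a in s for b in s)


def score(s):
    """Fitness: the size of the set if it is valid, -1 otherwise."""
    return -1 if has_3ap(s) else len(s)


def mutate(s, n, rng):
    """Hypothetical stand-in for an LLM-proposed edit: toggle one element."""
    return s ^ {rng.randrange(n)}


def evolve(n, generations=2000, pop_size=20, seed=0):
    rng = random.Random(seed)
    population = [frozenset()] * pop_size  # start from valid (empty) candidates
    scores = [0] * pop_size
    for _ in range(generations):
        # Tournament selection: the best of three random members is the parent.
        contenders = rng.sample(range(pop_size), 3)
        parent = population[max(contenders, key=lambda i: scores[i])]
        child = mutate(parent, n, rng)
        child_score = score(child)
        # Survival: the child replaces the weakest member if it scores at least as well.
        worst = min(range(pop_size), key=lambda i: scores[i])
        if child_score >= scores[worst]:
            population[worst] = child
            scores[worst] = child_score
    return population[max(range(pop_size), key=lambda i: scores[i])]


best = evolve(20)
print(len(best), sorted(best))
```

Invalid children score -1 and can never displace a valid member, so every candidate in the population, and hence the returned one, satisfies the constraint.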

This iterative process also yielded insights into optimizing prompts for AlphaEvolve. Gómez-Serrano noted that the model seemed to respond positively to encouragement, stating, "It worked better when we were prompting with some positive reinforcement to the LLM. Like saying ‘You can do this’ – this seemed to help. This is interesting. We don’t know why."

By late May, AlphaEvolve had been tested on 67 distinct mathematical problems. It achieved modest improvements on 23 of these, matched existing results on 36, and fell short on the remaining eight. Their findings were published in November 2025 in a paper titled "Mathematical Exploration and Discovery at Scale." Gómez-Serrano remarked that while individual results might have been attainable by human experts over extended periods, their team, lacking specialized expertise across all fields, achieved comparable outcomes within days.

Tao characterized current AI models as highly effective at identifying "low-hanging fruit" within vast problem sets – tasks that are tedious and often unappealing to humans. He cautioned, however, that these successes are often interspersed with a "big sea of unreported failures." Nevertheless, the notable achievements underscore AI’s growing utility. Gómez-Serrano estimates that AI now occupies two-thirds of his research time, signaling a profound shift: "It is getting to the point where it is useful and usable. This is the beginning of the new way we will do mathematics."

Mistaken Identities: The Emergence of AI as a Conversational Partner

In earlier years, AI’s strength in mathematics often lay in its ability to unearth obscure, long-forgotten proofs from vast repositories of literature. Igor Pak of UCLA observed that ChatGPT excelled at identifying relevant references and literature, making connections that even sophisticated search engines like Google Scholar, lacking semantic understanding, could not.

However, a significant shift occurred throughout 2025. Johannes Schmitt of ETH Zurich noted that LLMs began to prove valuable not just for providing complete answers but as effective "conversation partners." While these models were prone to errors, leading some mathematicians to disregard them, others, like Schmitt, adopted a more tolerant approach. He explained, "They say, I can still get something out of this conversation; even if not every idea is good, I can ignore the bad ones and take the good ones." Schmitt also pointed out the peculiar nature of AI’s errors: they often involved basic mistakes that a trained mathematician would rarely make, even while concurrently producing subtle, original, and correct insights.

Ernest Ryu, an applied mathematician specializing in optimization theory at UCLA, also began to pay closer attention to LLMs after the Olympiad results. While systems like AlphaEvolve focused on optimizing specific quantities, Ryu was interested in proving the conditions under which optimization algorithms are guaranteed to work. In the summer of 2025, he observed a dramatic improvement in the mathematical capabilities of LLMs. He began using them to assist in preparing lecture notes, particularly to fill in gaps in his memory of proof details. At times, he noted, the AI "would find an error in my reasoning, sometimes major, sometimes minor. Sometimes it would find a simpler proof than I had in my notes."

Ryu sensed that AI models were "exhibiting signs of life." Skeptical yet optimistic, he decided to test their limits. In October, he set out to solve an open problem in optimization theory that had previously eluded him, this time enlisting ChatGPT. The problem concerns a method, introduced by Yurii Nesterov in 1983, for finding the minimum of a function of many variables, and asks whether the method is guaranteed to converge to a lowest point rather than oscillate indefinitely. Such methods are fundamental to applied mathematics, particularly in machine learning, where neural networks are trained with techniques like gradient descent, which repeatedly takes a step downhill along the negative gradient. For well-behaved (smooth, convex) functions and a suitable step size, gradient descent is guaranteed to converge, but it can be slow, prompting the search for faster variants. Nesterov’s technique accelerates convergence by adding momentum: each step depends not only on the current point but also on the path taken so far. Nesterov showed that the function values fall quickly toward the minimum, but proving that the points produced by his method are themselves guaranteed to converge had remained an elusive challenge for 42 years.
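The contrast between plain gradient descent and a momentum-based method can be seen on a toy problem. The sketch below assumes a simple convex quadratic, f(x) = ½(a₁x₁² + a₂x₂²), with step size 1/L, where L is the largest curvature, and uses the common momentum weight (k − 1)/(k + 2), which is one standard parameterization of Nesterov-style acceleration rather than necessarily the exact form of the 1983 paper. It compares only function values; it does not address the convergence of the iterates that Ryu studied, nor does it reproduce his proof.

```python
def f(x, a):
    """Toy objective f(x) = 0.5 * sum(a_i * x_i**2), a convex quadratic."""
    return 0.5 * sum(ai * xi * xi for ai, xi in zip(a, x))


def grad(x, a):
    """Gradient of f: the i-th component is a_i * x_i."""
    return [ai * xi for ai, xi in zip(a, x)]


def gradient_descent(x0, a, step, iters):
    """Plain gradient descent: x <- x - step * grad f(x)."""
    x = list(x0)
    for _ in range(iters):
        x = [xi - step * gi for xi, gi in zip(x, grad(x, a))]
    return x


def nesterov(x0, a, step, iters):
    """Accelerated gradient with momentum weight (k - 1) / (k + 2).

    Each gradient step is taken from an extrapolated point y that leans in the
    direction of the previous move, so the update depends on the path so far.
    """
    x_prev = list(x0)
    y = list(x0)
    for k in range(1, iters + 1):
        x = [yi - step * gi for yi, gi in zip(y, grad(y, a))]
        beta = (k - 1) / (k + 2)
        y = [xi + beta * (xi - xpi) for xi, xpi in zip(x, x_prev)]
        x_prev = x
    return x_prev


a = [1.0, 100.0]   # curvatures; the largest, L = 100, sets the step size 1/L
x0 = [1.0, 1.0]
step, iters = 1.0 / 100.0, 100
print("gradient descent:", f(gradient_descent(x0, a, step, iters), a))
print("accelerated:     ", f(nesterov(x0, a, step, iters), a))
```

On this ill-conditioned example, the flat direction (curvature 1) is where plain gradient descent crawls, shrinking by a factor of 0.99 per step, while the momentum term lets the accelerated method make much faster progress.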

Ryu’s interaction with ChatGPT yielded a series of "incorrect proofs," yet these often contained "interesting steps, correct partial results that seemed potentially useful." He adopted an iterative approach, verifying the AI’s outputs, retaining correct segments, and feeding them back into the model with refined prompts. "I had to play the role of the verifier," Ryu stated. "With ChatGPT, I felt like I was covering a lot of ground very rapidly, much more quickly than I could do on my own. That’s what kept me going."

Within approximately 12 hours of work spread over three days, Ryu successfully proved a simplified version of the problem. A few days later, he achieved a complete proof of Nesterov’s method’s convergence. While not a groundbreaking discovery, Ryu deemed it a "good result" publishable in a top optimization journal, even without the AI component. He emphasized that ChatGPT "really accelerated the discovery" and predicted a future of "really, really impressive, substantial discoveries assisted by AI." Ryu’s newfound conviction led him to take a leave of absence from UCLA to join OpenAI as a member of their technical staff.

Order in the Court: AI Uncovers Hidden Structures

Throughout 2025 and into early 2026, AI was increasingly employed to prove highly abstract mathematical results. In September 2025, over a hundred mathematicians convened at Brown University for a program on algebraic combinatorics. Among them were Nicolás Libedinsky and David Plaza from Chile, José Simental from Mexico, Geordie Williamson from Australia, and Jordan Ellenberg from Wisconsin, all interested in computing a quantity known as the d-invariant. This invariant plays a crucial role in various mathematical domains.

Understanding the d-invariant requires an appreciation for the structure of permutation groups, which describe the ways to reorder a set of elements. For instance, the permutation group S3, representing the shuffling of three items, has six possible arrangements. These arrangements can be visualized as a graph in which edges connect arrangements that differ by a swap of two items. As the number of items n increases, Sn grows to n! elements, a count that rises even faster than exponentially (S4 already has 24, and S10 has 3,628,800), making graphical representation infeasible for larger groups. Mathematicians study these graphs to understand their inherent structure and because they help in analyzing other mathematical objects.

The d-invariant, in essence, quantifies the complexity of the "Bruhat interval" between two permutations in these graphs: the set of permutations lying on some path of arrows that leads from the first permutation to the second. Calculating this invariant for larger permutation groups is notoriously difficult, so Libedinsky, Simental, Plaza, Williamson, and Ellenberg, who were seeking to maximize the d-invariant for specific groups, turned to AI for assistance.
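The graph and intervals described above can be built directly for small groups. The sketch below assumes permutations are written as tuples of 0, ..., n−1 and follows the standard Bruhat-graph convention: an arrow goes from w to v when v is w with two entries swapped and v has more inversions (out-of-order pairs). It computes Bruhat intervals by reachability; it does not compute the d-invariant itself, which the article does not define in detail.

```python
from collections import deque
from itertools import combinations, permutations


def length(w):
    """Number of inversions of w: pairs of positions i < j with w[i] > w[j]."""
    return sum(1 for i, j in combinations(range(len(w)), 2) if w[i] > w[j])


def bruhat_graph(n):
    """Directed Bruhat graph on S_n.

    There is an arrow w -> v whenever v is obtained from w by swapping two
    entries and v has more inversions than w.
    """
    graph = {w: [] for w in permutations(range(n))}
    for w in graph:
        for i, j in combinations(range(n), 2):
            v = list(w)
            v[i], v[j] = v[j], v[i]
            v = tuple(v)
            if length(v) > length(w):
                graph[w].append(v)
    return graph


def reachable(graph, start):
    """All permutations reachable from start by following arrows (BFS)."""
    seen = {start}
    queue = deque([start])
    while queue:
        w = queue.popleft()
        for v in graph[w]:
            if v not in seen:
                seen.add(v)
                queue.append(v)
    return seen


def bruhat_interval(graph, u, v):
    """Permutations lying on some arrow path from u to v."""
    return {w for w in reachable(graph, u) if v in reachable(graph, w)}


g3 = bruhat_graph(3)
print(len(g3))                               # 6 permutations in S3
print(sum(len(out) for out in g3.values()))  # 9 arrows
# From the identity to the full reversal: the whole group, since these are
# the unique bottom and top elements.
print(sorted(bruhat_interval(g3, (0, 1, 2), (2, 1, 0))))
```

Small intervals already show geometric shapes: in S3, the interval from (0, 1, 2) to (1, 2, 0) has four elements joined in a square, a two-dimensional cube.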

In October 2025, Ellenberg enlisted Adam Wagner at DeepMind to utilize AlphaEvolve, a proprietary system, to analyze the Bruhat intervals of numerous permutation groups. The AI’s overnight analysis yielded intriguing results, prompting a flurry of communication among the researchers. The AI, in its internal processes, even mused about proposing a "truly outlandish" strategy, referencing a naval maneuver from Tom Clancy’s novel "The Hunt for Red October."

AlphaEvolve generated approximately 50 lines of Python code in its pursuit of intervals with large d-invariants. As the mathematicians delved into the code, Ellenberg discovered a significant simplification: when the number of items was a power of two, the program reduced to a mere five lines of code, revealing a "very beautiful" and "very explicit" underlying structure.

In a preprint released on January 3, 2026, the team reported that AlphaEvolve had uncovered a surprisingly special structure within these specific permutation groups: their Bruhat intervals formed higher-dimensional cubes known as hypercubes. Libedinsky expressed astonishment, stating, "If it was a human, it would be an extremely creative human." AlphaEvolve had revealed a "gigantic hypercube which we had not anticipated was there," answering a question they hadn’t even thought to ask. Williamson remarked that this structure "had been sitting there for 50 years in front of our nose. We just hadn’t noticed it."

While older machine learning techniques had also facilitated serendipitous discoveries, they required substantial engineering effort and specialized expertise. With LLMs, Williamson observed, "I can suddenly do an experiment in 20 minutes that two years ago would have taken me two weeks." This newfound accessibility allows for unprecedented exploration, promising to "discover the world that has riches beyond our imagination."

Around the Sphere: AI in Algebraic Geometry

Bruhat intervals, though combinatorial in nature, are also important in algebraic geometry, the field of abstract mathematics studied by Ravi Vakil, a mathematician at Stanford University and the current president of the American Mathematical Society. Algebraic geometry focuses on shapes defined by polynomial equations. Vakil and his colleagues, Balázs Elek of the University of New South Wales and Jim Bryan of the University of British Columbia, were investigating how spheres can be embedded within complex spaces known as flag varieties.

Each embedding, or mapping of points from a sphere to a flag variety, can be represented by a polynomial equation. Mathematicians visualize these embeddings as points in a high-dimensional space, analyzing those defined by polynomials of varying degrees. They sought to understand how these spaces evolve as the degree of the polynomials increases. Their observations suggested that as the degree approaches infinity, the space converges to the space of all continuous embeddings. However, they were surprised to find that this resemblance emerged much earlier than anticipated, with "some consistency that was not supposed to happen until you reached infinity, and it already happened."

To rigorously prove this phenomenon, Vakil and his team collaborated with Freddie Manners and George Salafatinos, then at DeepMind, utilizing specialized modules built upon Google Gemini. Their initial focus was on a simpler case. The AI produced an "elegant, correct, beautifully written" proof that clarified a previously obscure structure, leading to the realization of a potential generalization. The team then presented the AI with a sketch of the general proof, requesting it to fill in the details. Their findings, published in a preprint on January 12, 2026, confirmed the AI’s success.

Vakil reflected on the significance of the AI’s proof for the simpler case, stating, "The clarity of the argument gave us a new idea." This raised a fundamental question of attribution: "Who is that idea due to? Is it due to us? Is it due to the model?" While Vakil believes he might have eventually arrived at the proof given sufficient time, he acknowledged the possibility of a more convoluted approach and conceded that the paper might not have materialized without AI assistance. He concluded, "AI models will help us do mathematics by letting us do things we did not have time to do before." This interaction exemplifies AI’s current utility: enabling expert mathematicians to achieve results faster and with greater certainty, as proofs can be meticulously verified.

All Ye Need to Know: Challenges and the Future of Mathematical Learning

The increasing capabilities of AI in mathematics also present significant challenges. Daniel Litt cautioned against the proliferation of "AI-generated nonsense," and Joel David Hamkins of the University of Notre Dame expressed concern about an "ocean of slop that is overwhelming our journal systems." This concern highlights the growing reliance on formal proof verification, where proofs are translated into a computer-readable format to ensure logical accuracy. Terence Tao stressed, "AI without validation is too unreliable to be of use in any serious application."

The process of formalizing mathematical proofs is currently labor-intensive and requires considerable mathematical expertise. The advent of "autoformalization," where AI models translate mathematical statements into formal logic and generate proofs, offers a promising solution. Tao believes that for the first time, "we could formalize a significant fraction of mathematics through AI."
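To give a sense of what a computer-readable proof looks like, here is a small example in Lean 4, one widely used proof assistant (the article does not name a specific system). The informal sentence and its formal rendering are illustrative; encoding "even" as "leaves remainder 0 when divided by 2" is one choice among several. The `omega` tactic, part of Lean 4’s core, decides linear arithmetic over natural numbers and integers, including remainders by constant divisors, and the Lean kernel then checks the resulting proof. Autoformalization aims to produce statements like this one, and their proofs, from ordinary mathematical prose.

```lean
-- Informal statement: "The sum of two even natural numbers is even."
-- Formal rendering (an illustrative choice of encoding):
theorem sum_of_evens_is_even (m n : Nat) (hm : m % 2 = 0) (hn : n % 2 = 0) :
    (m + n) % 2 = 0 := by
  omega
```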

A more immediate concern for many mathematicians is the impact of AI on mathematical education. Even proponents of AI express apprehension. Ken Ono, a professor at the University of Virginia and founding mathematician at Axiom, voiced deep concern about "the role of AI in the future of work and training at all levels." Tao echoed this sentiment, noting that AI’s ability to instantly solve many assigned problems could "discourage a lot of the students from building up their mental muscles."

Hamkins has found it increasingly difficult to assign homework, as a substantial portion is now AI-generated. He stated, "I don’t want to read it. I don’t want to be the AI cop." This has necessitated a shift towards in-class assessments, posing a challenge for the entire academic profession. Another mathematician at a leading research university observed, "There is a serious risk that, in parallel with accelerating the progress of serious mathematical researchers, AI prevents us from making more mathematical researchers."

Despite these rapid advancements, mathematicians generally do not foresee the obsolescence of their discipline. Tao uses the analogy of climbing a mountain range: humans can plan strategic routes to summits like Everest, while current AIs, like "jumping robots," can ascend small walls but lack long-term strategic planning capabilities. He believes AI is "nowhere near the Mount Everests of math." Igor Pak remains skeptical of AI’s ability to tackle certain monumental problems in number theory, suggesting they will remain unresolved for centuries, but expresses confidence in humanity’s eventual discovery of their solutions.

The trajectory of AI development remains uncertain, with few signs of stagnation. Litt notes, "Things are moving very fast. I don’t see any sign they are slowing down." The early months of 2026 have witnessed a continuous stream of new AI-driven mathematical results from both major corporations and smaller entities, as well as from academics and hobbyists. Litt predicts, "My expectation is surely in 20 years we are going to see AI tools generating mathematics that in many measurable ways are better than every human mathematician."

However, Akshay Venkatesh reminds us that "there are infinitely many ways to formulate any piece of math." The choices made in this formulation are guided by human values and the inherent artistic nature of mathematics. He fears that if AI pushes mathematics away from its artistic heritage, the discipline will be diminished, even if theorem discovery accelerates.

The greatest hope for AI in mathematics lies in its potential to assist humans in finding and proving theorems that might otherwise remain elusive. This echoes the impact of computers over the past 80 years. However, the scale of current changes has unsettled many. At the 2026 Joint Mathematics Meetings, nervous jokes about AI’s potential to render mathematicians obsolete were common. Geordie Williamson, a proponent of AI in mathematics, urged against reacting with ignorance and fear, yet acknowledged the underlying anxiety about the potential diminishment of a "craft that people have spent their lives – dedicated their lives – towards." The future of mathematics, while undeniably intertwined with AI, will likely be shaped by a delicate balance between computational power and enduring human creativity and values.